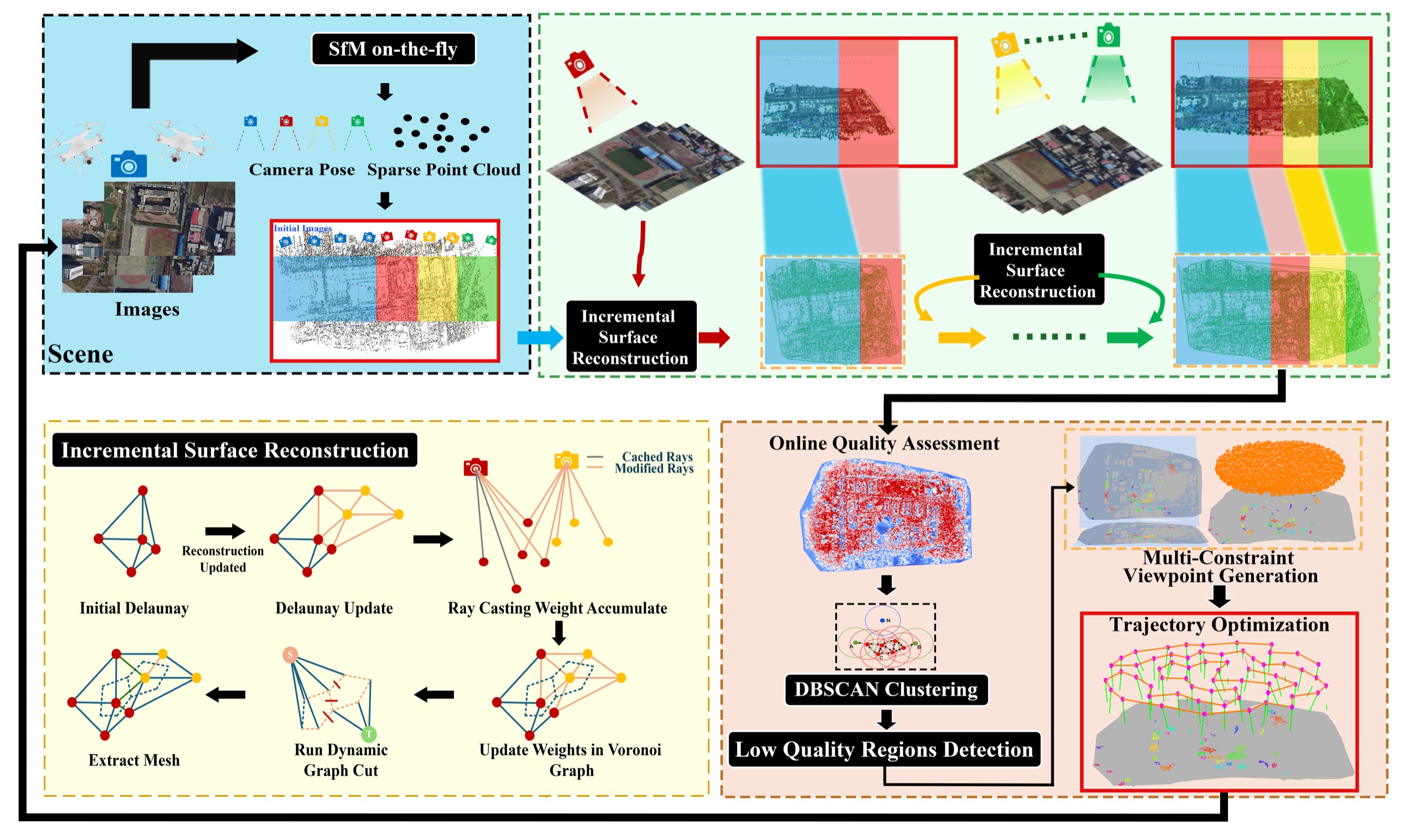

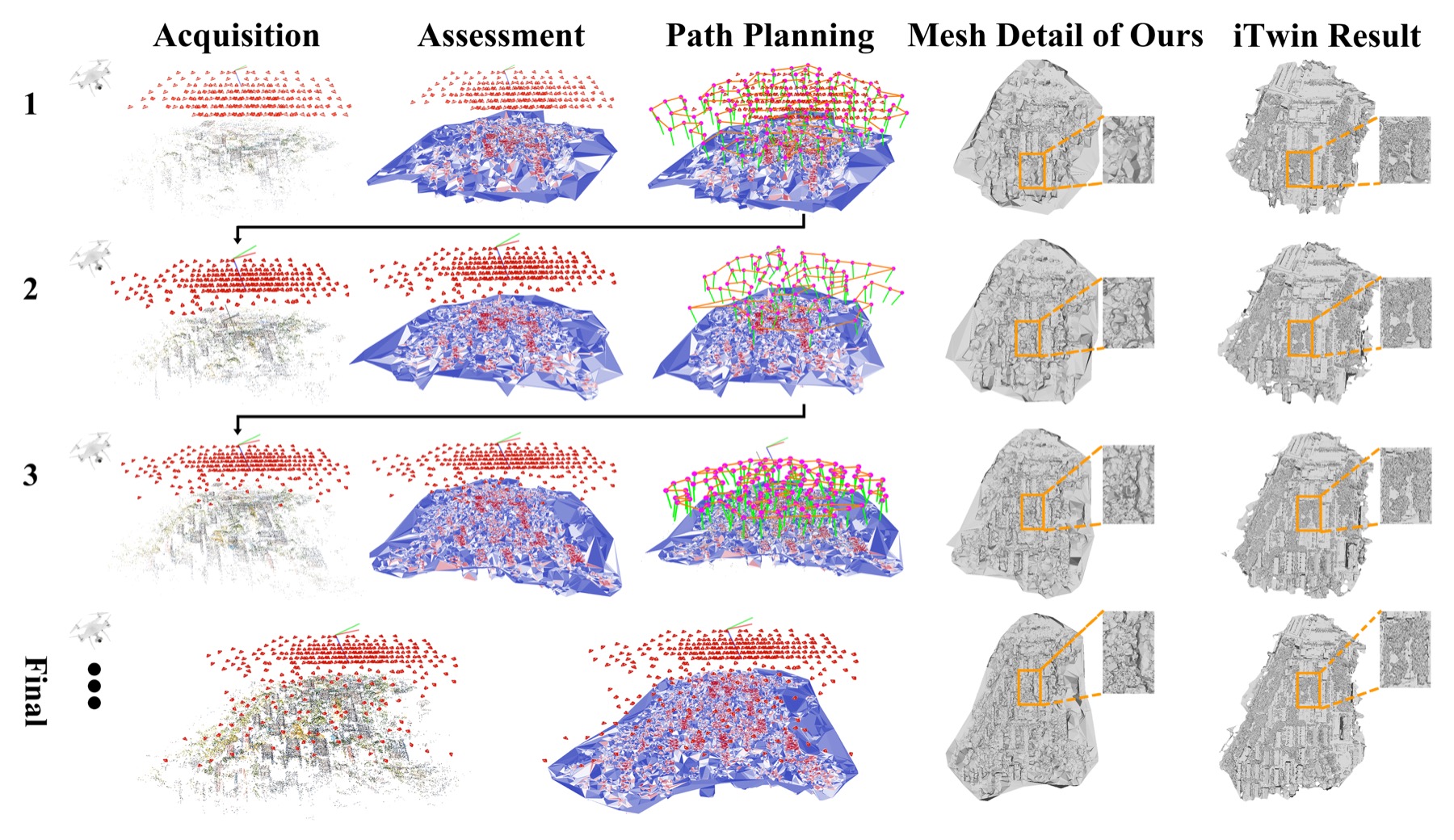

Image acquisition, incremental reconstruction, quality assessment, and path planning are coupled into a continuously updated workflow.

On-the-fly Feedback SfM: Online Explore-and-Exploit UAV Photogrammetry with Incremental Mesh Quality-Aware Indicator and Predictive Path Planning

Abstract

Compared with conventional offline UAV photogrammetry, real-time UAV photogrammetry is essential for time-critical geospatial applications such as disaster response and active digital-twin maintenance. Most existing online methods process captured images or sequential frames in real time, but do not explicitly evaluate the quality of the on-the-go 3D reconstruction or provide guided feedback for improving image acquisition. This work presents On-the-fly Feedback SfM, an explore-and-exploit framework for real-time UAV photogrammetry.

Built upon SfM on-the-fly, our pipeline integrates: (1) online incremental coarse-mesh generation from dynamically expanding sparse points; (2) online mesh quality assessment with actionable indicators; and (3) predictive path planning for on-the-fly trajectory refinement. Comprehensive experiments demonstrate in-situ reconstruction and evaluation in near real time while providing actionable feedback that reduces coverage gaps and re-flight costs.

Key Contributions

The project moves UAV photogrammetry from a passive process-after-capture mode toward an active, quality-aware data collection process.

Dynamic graph cuts generate an evolving mesh, and per-face GSD, redundancy, and reprojection error are fused into an ensemble quality score.

Low-quality regions drive DBSCAN grouping, multi-constraint viewpoint generation, sparsification, and altitude-aware trajectory optimization.

Method

The framework synchronizes acquisition, evaluation, and planning at short temporal intervals. After the online SfM update, each small incoming batch of 5-20 images triggers a complete feedback cycle: incremental surface reconstruction, quality assessment, and trajectory optimization.
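The cycle described above can be sketched as a small driver loop. This is a minimal illustration, not the authors' implementation: the function names (`batches`, `run_feedback_loop`) and the four stage callbacks are hypothetical placeholders, and the default batch size of 10 is simply one value inside the 5-20 range mentioned in the text.

```python
from typing import Any, Callable, Iterable, Iterator, List


def batches(images: Iterable[Any], batch_size: int = 10) -> Iterator[List[Any]]:
    """Group an incoming image stream into batches; the last one may be shorter."""
    batch: List[Any] = []
    for image in images:
        batch.append(image)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch


def run_feedback_loop(
    images: Iterable[Any],
    update_sfm: Callable[[List[Any]], Any],      # hypothetical: batch -> sparse cloud
    update_mesh: Callable[[Any], Any],           # hypothetical: sparse cloud -> mesh
    assess_quality: Callable[[Any], Any],        # hypothetical: mesh -> per-face scores
    plan_segment: Callable[[Any, Any], Any],     # hypothetical: mesh, scores -> flight segment
    batch_size: int = 10,
) -> List[Any]:
    """Run one complete feedback cycle per batch and collect the planned segments."""
    segments = []
    for batch in batches(images, batch_size):
        sparse_cloud = update_sfm(batch)          # online SfM update
        mesh = update_mesh(sparse_cloud)          # incremental surface reconstruction
        scores = assess_quality(mesh)             # quality assessment
        segments.append(plan_segment(mesh, scores))  # trajectory optimization
    return segments
```

Passing the stages as callbacks keeps the sketch independent of any particular SfM or meshing library; in a real system each stage would hold state across batches rather than being a pure function.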

◆SfM on-the-fly Update

Each incoming image batch is processed by SfM on-the-fly to update camera poses and the evolving sparse point cloud without interrupting UAV motion.

◆Online Incremental Surface Reconstruction

The evolving sparse cloud is converted into a triangular surface mesh through dynamic Delaunay updates, ray-based energy accumulation, and dynamic graph-cut optimization.

◆Online Mesh Quality Assessment

Ground sampling distance, observation redundancy, and reprojection error are computed per triangle face and fused into an ensemble quality score.
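One way to picture the fusion step is a weighted average of three normalized per-face terms. The sketch below is only an assumption about how such a score could be formed: the page does not give the normalization, thresholds, or weights, so `gsd_target_m`, `gsd_worst_m`, `redundancy_target`, `reproj_worst_px`, and the equal weights are all illustrative values. The GSD helper uses the standard nadir-view relation (distance times pixel pitch over focal length).

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class FaceMetrics:
    gsd_m: float            # ground sampling distance, metres per pixel (lower is better)
    redundancy: int         # number of images observing the face (higher is better)
    reproj_error_px: float  # mean reprojection error in pixels (lower is better)


def ground_sampling_distance(distance_m: float, focal_length_mm: float,
                             pixel_pitch_um: float) -> float:
    """Standard GSD relation: object distance * pixel pitch / focal length."""
    return distance_m * (pixel_pitch_um * 1e-6) / (focal_length_mm * 1e-3)


def clamp01(x: float) -> float:
    return max(0.0, min(1.0, x))


def ensemble_score(
    m: FaceMetrics,
    gsd_target_m: float = 0.02,      # assumed: GSD at or below this scores 1
    gsd_worst_m: float = 0.10,       # assumed: GSD at or above this scores 0
    redundancy_target: int = 5,      # assumed: this many views or more scores 1
    reproj_worst_px: float = 2.0,    # assumed: this error or more scores 0
    weights: Tuple[float, float, float] = (1.0, 1.0, 1.0),  # assumed equal weighting
) -> float:
    """Fuse the three indicators into one score in [0, 1]; 1 means good quality."""
    gsd_q = clamp01((gsd_worst_m - m.gsd_m) / (gsd_worst_m - gsd_target_m))
    red_q = clamp01(m.redundancy / redundancy_target)
    rep_q = clamp01(1.0 - m.reproj_error_px / reproj_worst_px)
    w_gsd, w_red, w_rep = weights
    return (w_gsd * gsd_q + w_red * red_q + w_rep * rep_q) / (w_gsd + w_red + w_rep)
```

With these illustrative defaults, a face seen by two images at 6 cm GSD with 1 px reprojection error scores roughly 0.47, which a planner could treat as a candidate for re-observation.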

◆Mesh-Quality-Aware Predictive Path Planning

Detected low-quality regions guide multi-constraint viewpoint generation, viewpoint sparsification, and lightweight trajectory optimization to produce an executable adaptive flight segment.
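The key contributions name DBSCAN as the grouping step for low-quality regions. The following is a minimal pure-Python sketch of that idea, assuming each face is represented by its centroid and an ensemble score in [0, 1]. The function names, the score threshold, and the `eps` and `min_pts` values are hypothetical, and the brute-force neighbour search stands in for whatever spatial index a real implementation would use.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float, float]
NOISE = -1
UNVISITED = -2


def region_query(points: Sequence[Point], i: int, eps: float) -> List[int]:
    """Indices of all points within eps of point i (including i itself)."""
    return [j for j, q in enumerate(points) if math.dist(points[i], q) <= eps]


def dbscan(points: Sequence[Point], eps: float, min_pts: int) -> List[int]:
    """Plain DBSCAN; returns one cluster label per point, NOISE for outliers."""
    labels = [UNVISITED] * len(points)
    cluster = 0
    for i in range(len(points)):
        if labels[i] != UNVISITED:
            continue
        neighbours = region_query(points, i, eps)
        if len(neighbours) < min_pts:
            labels[i] = NOISE
            continue
        labels[i] = cluster
        queue = [j for j in neighbours if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster          # former noise becomes a border point
            if labels[j] != UNVISITED:
                continue
            labels[j] = cluster
            j_neighbours = region_query(points, j, eps)
            if len(j_neighbours) >= min_pts:  # j is a core point: keep expanding
                queue.extend(j_neighbours)
        cluster += 1
    return labels


def low_quality_clusters(
    centroids: Sequence[Point],
    scores: Sequence[float],
    threshold: float = 0.5,   # assumed cut-off for "low quality"
    eps: float = 1.5,         # assumed neighbourhood radius in scene units
    min_pts: int = 3,         # assumed minimum cluster density
) -> Dict[int, List[int]]:
    """Map each cluster id to the original face indices it contains."""
    low = [i for i, s in enumerate(scores) if s < threshold]
    labels = dbscan([centroids[i] for i in low], eps, min_pts)
    clusters: Dict[int, List[int]] = {}
    for face_index, label in zip(low, labels):
        if label != NOISE:
            clusters.setdefault(label, []).append(face_index)
    return clusters
```

Each resulting cluster would then be the unit for which candidate viewpoints are generated; isolated low-score faces are dropped as noise rather than triggering a dedicated detour.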

Experiments and Results

Experiments evaluate four aspects of the framework: incremental surface reconstruction, online mesh-quality assessment, adaptive path planning, and the full explore-and-exploit feedback pipeline.

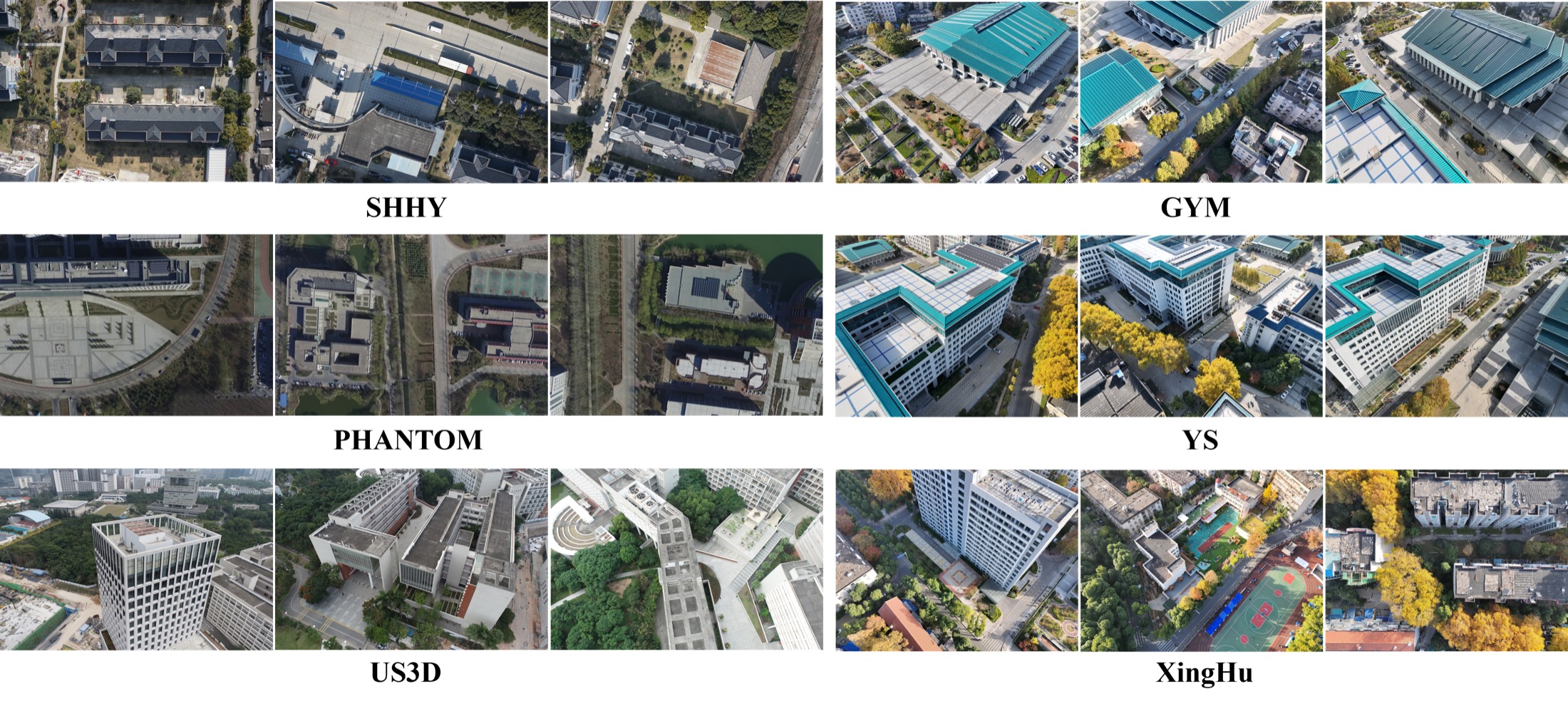

The SHHY, GYM, and YS datasets are used to compare mesh quality and online reconstruction efficiency against COLMAP and OpenMVG.

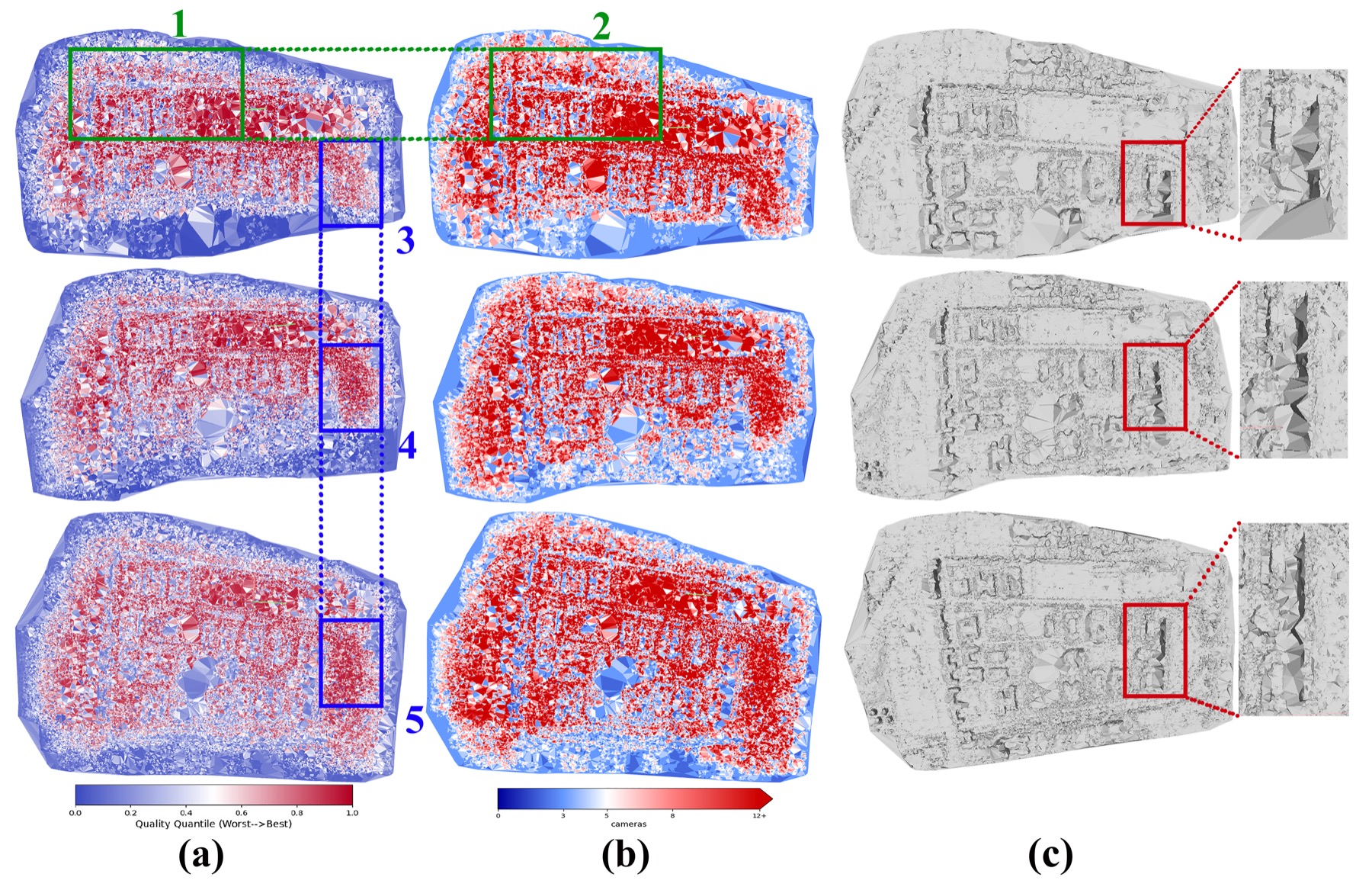

The PHANTOM and US3D datasets are used to validate whether the ensemble quality score tracks global and local reconstruction evolution.

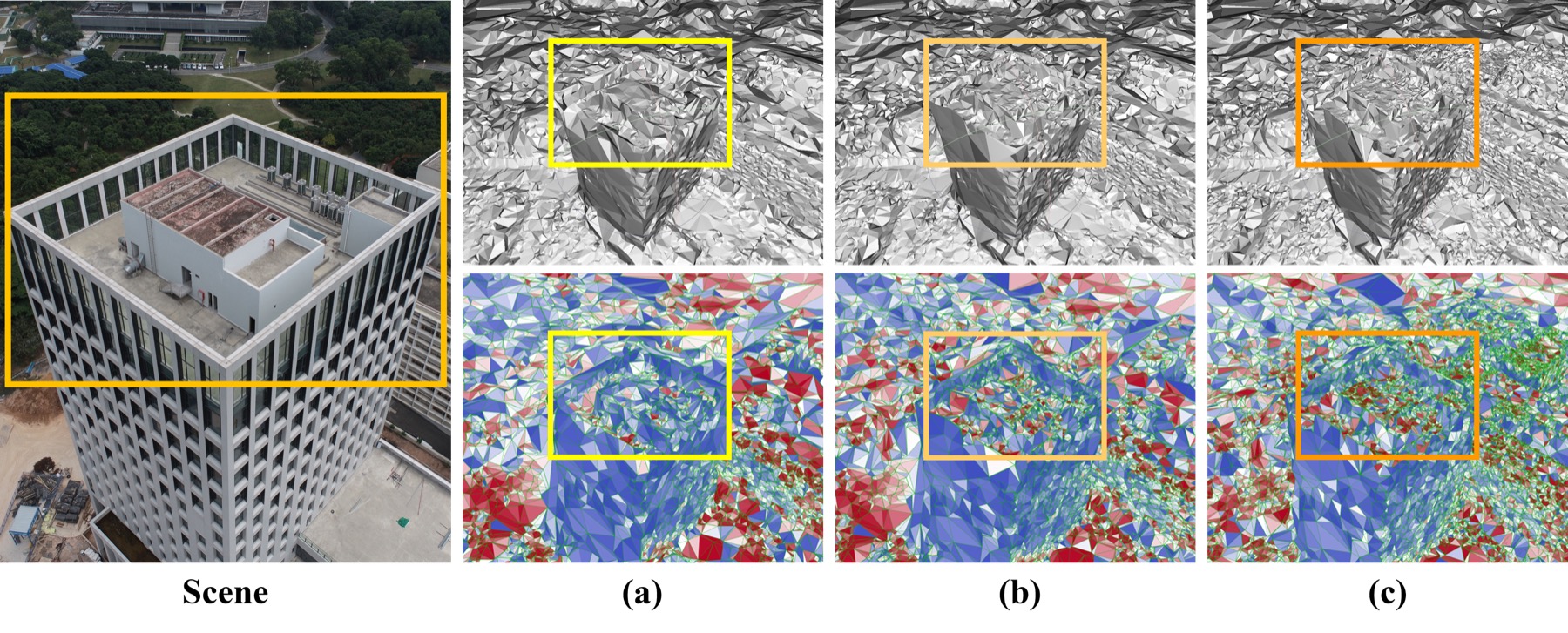

The US3D and XingHu datasets are used to test whether quality feedback reallocates viewpoints and improves acquisition in realistic online loops.

Datasets

| Dataset | Images | Platform | Resolution (px) | Source |
|---|---|---|---|---|
| SHHY | 770 | DJI Mavic 2 Pro | 1920 x 1080 | Self-captured |
| PHANTOM | 467 | DJI Mavic 2 Pro | 1920 x 1080 | Bu et al., 2016 |
| US3D | 990 | - | 5472 x 3648 | Lin et al., 2022 |
| GYM | 580 | DJI Matrice 4T | 4032 x 3024 | Self-captured |
| YS | 320 | DJI Matrice 4T | 4032 x 3024 | Self-captured |
| XingHu | - | DJI Matrice 4T | 4032 x 3024 | Self-captured |

Surface Reconstruction Quality

| Dataset | Method | Accuracy | Completeness | F1 Score |
|---|---|---|---|---|
| SHHY | COLMAP | 0.4970 | **0.4771** | 0.4868 |
| SHHY | OpenMVG | **0.7270** | 0.3633 | 0.4845 |
| SHHY | Ours | 0.6509 | 0.4527 | **0.5340** |
| GYM | COLMAP | 0.7793 | **0.6092** | **0.6838** |
| GYM | OpenMVG | 0.7363 | 0.4937 | 0.5911 |
| GYM | Ours | **0.8001** | 0.5958 | 0.6830 |
| YS | COLMAP | 0.7042 | **0.5755** | **0.6334** |
| YS | OpenMVG | 0.7054 | 0.4672 | 0.5621 |
| YS | Ours | **0.7271** | 0.5533 | 0.6284 |

Bold indicates the best performance within each dataset group.
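The page does not spell out how the F1 Score column is computed. Assuming the usual harmonic mean of accuracy and completeness, the short check below reproduces the reported values for the rows tested to four decimal places; the function name `f1_score` is illustrative.

```python
def f1_score(accuracy: float, completeness: float) -> float:
    """Harmonic mean of accuracy and completeness (assumed definition)."""
    if accuracy + completeness == 0:
        return 0.0
    return 2 * accuracy * completeness / (accuracy + completeness)


# (accuracy, completeness, reported F1) taken from the table above
rows = [
    (0.6509, 0.4527, 0.5340),  # SHHY, Ours
    (0.7793, 0.6092, 0.6838),  # GYM, COLMAP
    (0.7271, 0.5533, 0.6284),  # YS, Ours
]
for accuracy, completeness, reported in rows:
    computed = f1_score(accuracy, completeness)
    print(f"{computed:.4f} vs reported {reported:.4f}")
```

Because F1 penalizes imbalance, a method with high accuracy but low completeness (OpenMVG on SHHY, for example) can end up with a lower F1 than one that is moderate on both.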

Online Quality Assessment

The ensemble mesh-quality indicator tracks both global reconstruction evolution and localized geometric consistency as new images are integrated.

Online Explore-and-Exploit Pipeline

| Stage | Accuracy | Completeness | F1 Score | Avg. Time / Image | Trajectory Generation |
|---|---|---|---|---|---|
| Iteration 1 | 0.6764 | 0.4143 | 0.5138 | 1.465 s | 602.311 ms |
| Iteration 2 | 0.8333 | 0.5232 | 0.6428 | 1.470 s | 183.801 ms |
| Iteration 3 | 0.8141 | 0.4830 | 0.6063 | 1.415 s | 497.513 ms |
| Final | 0.8291 | 0.5099 | 0.6315 | 1.475 s | - |

The XingHu experiment keeps image processing around 1.4-1.5 seconds per image, while online trajectory generation stays below one second.

BibTeX

@misc{lou2025ontheflyfeedbacksfm,
  title={On-the-fly Feedback SfM: Online Explore-and-Exploit UAV Photogrammetry with Incremental Mesh Quality-Aware Indicator and Predictive Path Planning},
  author={Liyuan Lou and Wanyun Li and Wentian Gan and Yifei Yu and Tengfei Wang and Xin Wang and Zongqian Zhan},
  year={2025},
  eprint={2512.02375},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  doi={10.48550/arXiv.2512.02375},
  url={https://arxiv.org/abs/2512.02375}
}

Contact

School of Geodesy and Geomatics, Wuhan University, Wuhan 430079, China

Corresponding authors: xwang@sgg.whu.edu.cn, zqzhan@sgg.whu.edu.cn